Other articles (55)

-

Websites made with MediaSPIP

2 May 2011. This page lists some websites based on MediaSPIP.

-

General document management

13 May 2011. MediaSPIP never modifies the original uploaded document.

For each uploaded document it performs two successive operations: creating an additional version that can easily be viewed online, while leaving the original downloadable in case it cannot be read in a web browser; and retrieving the original document's metadata to describe the file in text.

The tables below explain what MediaSPIP can do (...)

-

Creating farms of unique websites

13 April 2011. MediaSPIP platforms can be installed as a farm, with a single "core" hosted on a dedicated server and used by multiple websites.

This allows (among other things): implementation costs to be shared between several different projects / individuals; rapid deployment of multiple unique sites; creation of groups of like-minded sites, making it possible to browse media in a more controlled and selective environment than the major "open" (...)

On other sites (5330)

-

FFMpeg: ffmpeg failed to execute command error

4 March 2020, by Richard McFriend Oluwamuyiwa

I am trying to transcode a 1.2 MB video file uploaded to my website server via a PHP/HTML upload, but I keep getting the error:

PHP Fatal error: Uncaught exception 'Alchemy\BinaryDriver\Exception\ExecutionFailureException' with message 'ffmpeg failed to execute command '/usr/local/bin/ffmpeg' '-y' '-i' '/home/user/public_html/contents/videos/1490719990_MP4_360p_Short_video_clip_nature_mp4.mp4' '-async' '1' '-metadata:s:v:0' 'start_time=0' '-r' '16' '-b_strategy' '1' '-bf' '3' '-g' '9' '-vcodec' 'libx264' '-acodec' 'libmp3lame' '-b:v' '128k' '-refs' '6' '-coder' '1' '-sc_threshold' '40' '-flags' '+loop' '-me_range' '16' '-subq' '7' '-i_qfactor' '0.71' '-qcomp' '0.6' '-qdiff' '4' '-trellis' '1' '-b:a' '8k' '-ac' '1' '-pass' '1' '-passlogfile' '/tmp/ffmpeg-passes58dab05a323b6eknk4/pass-58dab05a32465' '/home/user/public_html/contents/videos/1490719990_MP4_360p_Short_video_clip_nature_mp4_22995.mp4'' in /home/user/public_html/app/ffmpeg/vendor/alchemy/binary-driver/src/Alchemy/BinaryDriver/ProcessRunner.php:100

Stack trace:

#0 /home/user/public_html/app/ffmpeg/vendor/alchemy/binary-driver/src/Alchemy/BinaryDriver/ProcessRunner.php(72): Alch in /home/user/public_html/app/ffmpeg/vendor/php-ffmpeg/php-ffmpeg/src/FFMpeg/Media/Video.php on line 168

Funny thing is that the same server is extracting frames and getting the duration of the same file using ffprobe and ffmpeg.

Here is the code I am using to transcode:

$ffmpeg = FFMpeg\FFMpeg::create(['timeout'=>3600, 'ffmpeg.threads'=>12, 'ffmpeg.binaries' => '/usr/local/bin/ffmpeg',

'ffprobe.binaries' => '/usr/local/bin/ffprobe']);

$ffprobe_prep = FFMpeg\FFProbe::create(['ffmpeg.binaries' => '/usr/local/bin/ffmpeg',

'ffprobe.binaries' => '/usr/local/bin/ffprobe']);

$ffprobe = $ffprobe_prep->format($video_file);

$video = $ffmpeg->open($video_file);

// Get video duration to ensure our videos are never longer than our video limit.

$duration = $ffprobe->get('duration');

// Use mp4 format and set the audio bitrate to 8 kbit/s and mono channel.

// TODO: Try stereo later...

$format = new FFMpeg\Format\Video\X264('libmp3lame', 'libx264');

$format

-> setKiloBitrate(128)

-> setAudioChannels(1)

-> setAudioKiloBitrate(8);

$first = $ffprobe_prep

->streams($video_file)

->videos()

->first();

$width = $first->get('width');

if($width > VIDEO_WIDTH){

// Resize to 558 x 314 and resize to fit width.

$video

->filters()

->resize(new FFMpeg\Coordinate\Dimension(VIDEO_WIDTH, ceil(VIDEO_WIDTH / 16 * 9)));

}

// Trim videos longer than three minutes to three minutes.

if($duration > MAX_VIDEO_PLAYTIME){

$video

->filters()

->clip(FFMpeg\Coordinate\TimeCode::fromSeconds(0), FFMpeg\Coordinate\TimeCode::fromSeconds(MAX_VIDEO_PLAYTIME));

}

// Change the framerate to 16fps and GOP as 9.

$video

->filters()

->framerate(new FFMpeg\Coordinate\FrameRate(16), 9);

// Synchronize audio and video

$video->filters()->synchronize();

$video->save($format, $video_file_new_2);

I have contacted my host, but they were of little help. The only useful information they could provide is that ffmpeg was compiled on the server with libmp3lame support.

This code works perfectly on localhost.

Any help as to why I may be getting the error and how to correct it is appreciated.

-

VLC dead input for RTP stream

27 March, by CaptainCheese

I'm working on creating an RTP stream that's meant to display live waveform data from Pioneer prolink players. The motivation for sending this video out is to be able to receive it in a Flutter frontend. I was initially just sending a base-24 encoding of the raw ARGB packed ints per frame across a Kafka topic, but processing this data in Flutter proved untenable and was bogging down the main UI thread. I'm not sure this is the best way of going about it; I'm just trying to get anything to work if it means some speedup on the frontend. The issue with the following implementation is that when I run

vlc --rtsp-timeout=120000 --network-caching=30000 -vvvv stream_1.sdp

where

% cat stream_1.sdp

v=0

o=- 0 1 IN IP4 127.0.0.1

s=RTP Stream

c=IN IP4 127.0.0.1

t=0 0

a=tool:libavformat

m=video 5007 RTP/AVP 96

a=rtpmap:96 H264/90000

I see (among other questionable logs) the following:

[0000000144c44d10] live555 demux error: no data received in 10s, aborting

[00000001430ee2f0] main input debug: EOF reached

[0000000144b160c0] main decoder debug: killing decoder fourcc `h264'

[0000000144b160c0] main decoder debug: removing module "videotoolbox"

[0000000144b164a0] main packetizer debug: removing module "h264"

[0000000144c44d10] main demux debug: removing module "live555"

[0000000144c45bb0] main stream debug: removing module "record"

[0000000144a64960] main stream debug: removing module "cache_read"

[0000000144c29c00] main stream debug: removing module "filesystem"

[00000001430ee2f0] main input debug: Program doesn't contain anymore ES

[0000000144806260] main playlist debug: dead input

[0000000144806260] main playlist debug: changing item without a request (current 0/1)

[0000000144806260] main playlist debug: nothing to play

[0000000142e083c0] macosx interface debug: Playback has been ended

[0000000142e083c0] macosx interface debug: Releasing IOKit system sleep blocker (37463)

This is sort of confusing because when I run

ffmpeg -protocol_whitelist file,crypto,data,rtp,udp -i stream_1.sdp -vcodec libx264 -f null -

I see a number of logs about

[h264 @ 0x139304080] non-existing PPS 0 referenced

Last message repeated 1 times

[h264 @ 0x139304080] decode_slice_header error

[h264 @ 0x139304080] no frame!

After which I see the stream is received and I start getting telemetry on it:

Input #0, sdp, from 'stream_1.sdp':

Metadata:

title : RTP Stream

Duration: N/A, start: 0.016667, bitrate: N/A

Stream #0:0: Video: h264 (Constrained Baseline), yuv420p(progressive), 1200x200, 60 fps, 60 tbr, 90k tbn

Stream mapping:

Stream #0:0 -> #0:0 (h264 (native) -> h264 (libx264))

Press [q] to stop, [?] for help

[libx264 @ 0x107f04f40] using cpu capabilities: ARMv8 NEON

[libx264 @ 0x107f04f40] profile High, level 3.1, 4:2:0, 8-bit

Output #0, null, to 'pipe:':

Metadata:

title : RTP Stream

encoder : Lavf61.7.100

Stream #0:0: Video: h264, yuv420p(tv, progressive), 1200x200, q=2-31, 60 fps, 60 tbn

Metadata:

encoder : Lavc61.19.101 libx264

Side data:

cpb: bitrate max/min/avg: 0/0/0 buffer size: 0 vbv_delay: N/A

[out#0/null @ 0x60000069c000] video:144KiB audio:0KiB subtitle:0KiB other streams:0KiB global headers:0KiB muxing overhead: unknown

frame= 1404 fps= 49 q=-1.0 Lsize=N/A time=00:00:23.88 bitrate=N/A speed=0.834x

Not sure why VLC is turning me down like some kind of Berghain bouncer that lets nobody in the entire night.
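For context on the ffmpeg log above: "non-existing PPS 0 referenced" is what FFmpeg's H.264 decoder prints when a slice refers to a picture parameter set it has not received yet, and decoding starts once SPS and PPS NAL units arrive. Note also that the SDP shown earlier has no a=fmtp line carrying parameter sets, so a receiver has to find them in the stream itself. One way to check whether an encoder emits them is to dump its output to a raw Annex B file and count NAL unit types. The following is only a minimal sketch under that assumption: `NalScanner` and `countNalType` are hypothetical names (not part of JavaCV or FFmpeg), and it does not parse RTP packets, whose payloads are packetized differently.

```java
// Hypothetical helper, not part of JavaCV or FFmpeg.
// Counts NAL units of one type in a raw H.264 Annex B byte stream, where each
// NAL unit is preceded by a start code (00 00 01 or 00 00 00 01) and the NAL
// unit type is the low five bits of the byte that follows the start code.
final class NalScanner {

    static final int NAL_IDR_SLICE = 5; // coded slice of an IDR picture
    static final int NAL_SPS = 7;       // sequence parameter set
    static final int NAL_PPS = 8;       // picture parameter set

    static int countNalType(byte[] stream, int nalType) {
        int count = 0;
        // i + 3 must be a valid index because the NAL header follows the 3-byte start code.
        for (int i = 0; i + 3 < stream.length; i++) {
            if (stream[i] == 0 && stream[i + 1] == 0 && stream[i + 2] == 1) {
                int header = stream[i + 3] & 0xFF;
                if ((header & 0x1F) == nalType) {
                    count++;
                }
                i += 2; // jump past the start code; the loop increment lands on the header
            }
        }
        return count;
    }

    public static void main(String[] args) {
        // Tiny synthetic stream: SPS (0x67), PPS (0x68), IDR slice (0x65), one payload byte each.
        byte[] sample = {
            0, 0, 0, 1, 0x67, 0x42,
            0, 0, 1, 0x68, (byte) 0xCE,
            0, 0, 1, 0x65, (byte) 0x88
        };
        System.out.println("SPS count: " + countNalType(sample, NAL_SPS));
        System.out.println("PPS count: " + countNalType(sample, NAL_PPS));
        System.out.println("IDR count: " + countNalType(sample, NAL_IDR_SLICE));
    }
}
```

If a dump of the stream shows SPS and PPS only at the very start, a player that joins later has nothing to decode against until they are repeated, which would be consistent with, though not proof of, the behaviour described here.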

I initially tried just converting the ARGB ints to a YUV420p buffer and used this to create the Frame objects but I couldn't for the life of me figure out how to properly initialize it as the attempts I made kept spitting out garbled junk.
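The ARGB to YUV420P step mentioned above can be sketched as follows. This is a minimal illustration, assuming BT.601 limited-range integer coefficients and a single contiguous buffer laid out as the full Y plane, then the quarter-size U plane, then the quarter-size V plane. The class and method names are hypothetical, chroma is taken from the top-left pixel of each 2x2 block rather than averaged, and how the resulting buffer should be handed to a JavaCV Frame is deliberately not shown.

```java
// Hypothetical helper, not part of JavaCV.
// Converts packed ARGB ints (for example from BufferedImage.getRGB) into a
// planar YUV 4:2:0 buffer: Y plane (w*h bytes), then U (w/2 * h/2), then V.
final class ArgbToYuv420p {

    static byte[] convert(int[] argb, int width, int height) {
        if (width % 2 != 0 || height % 2 != 0) {
            throw new IllegalArgumentException("width and height must be even for 4:2:0");
        }
        if (argb.length < width * height) {
            throw new IllegalArgumentException("pixel array is smaller than width * height");
        }
        int frameSize = width * height;
        int chromaWidth = width / 2;
        int chromaSize = frameSize / 4;
        byte[] yuv = new byte[frameSize + 2 * chromaSize];

        for (int row = 0; row < height; row++) {
            for (int col = 0; col < width; col++) {
                int pixel = argb[row * width + col];
                int r = (pixel >> 16) & 0xFF;
                int g = (pixel >> 8) & 0xFF;
                int b = pixel & 0xFF;

                // BT.601 limited range: luma ends up in 16..235.
                int y = ((66 * r + 129 * g + 25 * b + 128) >> 8) + 16;
                yuv[row * width + col] = (byte) clamp(y);

                // One chroma sample per 2x2 block, taken from its top-left pixel.
                if ((row & 1) == 0 && (col & 1) == 0) {
                    int u = ((-38 * r - 74 * g + 112 * b + 128) >> 8) + 128;
                    int v = ((112 * r - 94 * g - 18 * b + 128) >> 8) + 128;
                    int chromaIndex = (row / 2) * chromaWidth + (col / 2);
                    yuv[frameSize + chromaIndex] = (byte) clamp(u);
                    yuv[frameSize + chromaSize + chromaIndex] = (byte) clamp(v);
                }
            }
        }
        return yuv;
    }

    static int clamp(int value) {
        return Math.max(0, Math.min(255, value));
    }

    public static void main(String[] args) {
        int[] white = {0xFFFFFFFF, 0xFFFFFFFF, 0xFFFFFFFF, 0xFFFFFFFF};
        byte[] out = convert(white, 2, 2);
        System.out.println("bytes: " + out.length + ", first Y: " + (out[0] & 0xFF));
    }
}
```

Garbled output from a hand-rolled conversion is often a layout problem (interleaved instead of planar data, or wrong plane sizes or strides) rather than a colour-math problem, so checking the buffer length against width * height * 3 / 2 is a cheap first test.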

Please go easy on me, I've made an unhealthy habit of resolving nearly all of my coding questions by simply lurking the internet for answers but that's not really helping me solve this issue.

Here's the Java I'm working on (the meat of the RTP comms occurs within updateWaveformForPlayer()):

package com.bugbytz.prolink;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.Producer;

import org.apache.kafka.clients.producer.ProducerConfig;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.bytedeco.ffmpeg.global.avcodec;

import org.bytedeco.ffmpeg.global.avutil;

import org.bytedeco.javacv.FFmpegFrameGrabber;

import org.bytedeco.javacv.FFmpegFrameRecorder;

import org.bytedeco.javacv.FFmpegLogCallback;

import org.bytedeco.javacv.Frame;

import org.bytedeco.javacv.FrameGrabber;

import org.deepsymmetry.beatlink.CdjStatus;

import org.deepsymmetry.beatlink.DeviceAnnouncement;

import org.deepsymmetry.beatlink.DeviceAnnouncementAdapter;

import org.deepsymmetry.beatlink.DeviceFinder;

import org.deepsymmetry.beatlink.Util;

import org.deepsymmetry.beatlink.VirtualCdj;

import org.deepsymmetry.beatlink.data.BeatGridFinder;

import org.deepsymmetry.beatlink.data.CrateDigger;

import org.deepsymmetry.beatlink.data.MetadataFinder;

import org.deepsymmetry.beatlink.data.TimeFinder;

import org.deepsymmetry.beatlink.data.WaveformDetail;

import org.deepsymmetry.beatlink.data.WaveformDetailComponent;

import org.deepsymmetry.beatlink.data.WaveformFinder;

import java.awt.*;

import java.awt.image.BufferedImage;

import java.io.File;

import java.nio.ByteBuffer;

import java.text.DecimalFormat;

import java.util.ArrayList;

import java.util.HashMap;

import java.util.HashSet;

import java.util.Map;

import java.util.Properties;

import java.util.Set;

import java.util.concurrent.ExecutionException;

import java.util.concurrent.Executors;

import java.util.concurrent.ScheduledExecutorService;

import java.util.concurrent.ScheduledFuture;

import java.util.concurrent.TimeUnit;

import static org.bytedeco.ffmpeg.global.avutil.AV_PIX_FMT_RGB24;

public class App {

public static ArrayList<Track> tracks = new ArrayList<>();

public static boolean dbRead = false;

public static Properties props = new Properties();

private static Map<Integer, FFmpegFrameRecorder> recorders = new HashMap<>();

private static Map<Integer, Integer> frameCount = new HashMap<>();

private static final ScheduledExecutorService scheduler = Executors.newScheduledThreadPool(1);

private static final int FPS = 60;

private static final int FRAME_INTERVAL_MS = 1000 / FPS;

private static Map<Integer, ScheduledFuture<?>> schedules = new HashMap<>();

private static Set<Integer> streamingPlayers = new HashSet<>();

public static String byteArrayToMacString(byte[] macBytes) {

StringBuilder sb = new StringBuilder();

for (int i = 0; i < macBytes.length; i++) {

sb.append(String.format("%02X%s", macBytes[i], (i < macBytes.length - 1) ? ":" : ""));

}

return sb.toString();

}

private static void updateWaveformForPlayer(int player) throws Exception {

Integer frame_for_player = frameCount.get(player);

if (frame_for_player == null) {

frame_for_player = 0;

frameCount.putIfAbsent(player, frame_for_player);

}

if (!WaveformFinder.getInstance().isRunning()) {

WaveformFinder.getInstance().start();

}

WaveformDetail detail = WaveformFinder.getInstance().getLatestDetailFor(player);

if (detail != null) {

WaveformDetailComponent component = (WaveformDetailComponent) detail.createViewComponent(

MetadataFinder.getInstance().getLatestMetadataFor(player),

BeatGridFinder.getInstance().getLatestBeatGridFor(player)

);

component.setMonitoredPlayer(player);

component.setPlaybackState(player, TimeFinder.getInstance().getTimeFor(player), true);

component.setAutoScroll(true);

int width = 1200;

int height = 200;

Dimension dimension = new Dimension(width, height);

component.setPreferredSize(dimension);

component.setSize(dimension);

component.setScale(1);

component.doLayout();

// Create a fresh BufferedImage and clear it before rendering

BufferedImage image = new BufferedImage(width, height, BufferedImage.TYPE_INT_RGB);

Graphics2D g = image.createGraphics();

g.clearRect(0, 0, width, height); // Clear any old content

// Draw waveform into the BufferedImage

component.paint(g);

g.dispose();

int port = 5004 + player;

String inputFile = port + "_" + frame_for_player + ".mp4";

// Initialize the FFmpegFrameRecorder for YUV420P

FFmpegFrameRecorder recorder_file = new FFmpegFrameRecorder(inputFile, width, height);

FFmpegLogCallback.set(); // Enable FFmpeg logging for debugging

recorder_file.setFormat("mp4");

recorder_file.setVideoCodec(avcodec.AV_CODEC_ID_H264);

recorder_file.setPixelFormat(avutil.AV_PIX_FMT_YUV420P); // Use YUV420P format directly

recorder_file.setFrameRate(FPS);

// Set video options

recorder_file.setVideoOption("preset", "ultrafast");

recorder_file.setVideoOption("tune", "zerolatency");

recorder_file.setVideoOption("x264-params", "repeat-headers=1");

recorder_file.setGopSize(FPS);

try {

recorder_file.start(); // Ensure this is called before recording any frames

System.out.println("Recorder started successfully for player: " + player);

} catch (org.bytedeco.javacv.FFmpegFrameRecorder.Exception e) {

e.printStackTrace();

}

// Get all pixels in one call

int[] pixels = new int[width * height];

image.getRGB(0, 0, width, height, pixels, 0, width);

recorder_file.recordImage(width,height,Frame.DEPTH_UBYTE,1,3 * width, AV_PIX_FMT_RGB24, ByteBuffer.wrap(argbToByteArray(pixels, width, height)));

recorder_file.stop();

recorder_file.release();

final FFmpegFrameRecorder recorder = recorders.get(player);

FFmpegFrameGrabber grabber = new FFmpegFrameGrabber(inputFile);

try {

grabber.start();

} catch (Exception e) {

e.printStackTrace();

}

if (recorder == null) {

try {

String outputStream = "rtp://127.0.0.1:" + port;

FFmpegFrameRecorder initial_recorder = new FFmpegFrameRecorder(outputStream, grabber.getImageWidth(), grabber.getImageHeight());

initial_recorder.setFormat("rtp");

initial_recorder.setVideoCodec(avcodec.AV_CODEC_ID_H264);

initial_recorder.setPixelFormat(avutil.AV_PIX_FMT_YUV420P);

initial_recorder.setFrameRate(grabber.getFrameRate());

initial_recorder.setGopSize(FPS);

initial_recorder.setVideoOption("x264-params", "keyint=60");

initial_recorder.setVideoOption("rtsp_transport", "tcp");

initial_recorder.start();

recorders.putIfAbsent(player, initial_recorder);

frameCount.putIfAbsent(player, 0);

putToRTP(player, grabber, initial_recorder);

}

catch (Exception e) {

e.printStackTrace();

}

}

else {

putToRTP(player, grabber, recorder);

}

File file = new File(inputFile);

if (file.exists() && file.delete()) {

System.out.println("Successfully deleted file: " + inputFile);

} else {

System.out.println("Failed to delete file: " + inputFile);

}

}

}

public static void putToRTP(int player, FFmpegFrameGrabber grabber, FFmpegFrameRecorder recorder) throws FrameGrabber.Exception {

final Frame frame = grabber.grabFrame();

int frameCount_local = frameCount.get(player);

frame.keyFrame = frameCount_local++ % FPS == 0;

frameCount.put(player, frameCount_local);

try {

recorder.record(frame);

} catch (FFmpegFrameRecorder.Exception e) {

throw new RuntimeException(e);

}

}

public static byte[] argbToByteArray(int[] argb, int width, int height) {

int totalPixels = width * height;

byte[] byteArray = new byte[totalPixels * 3]; // 3 bytes per pixel (RGB, alpha dropped)

for (int i = 0; i < totalPixels; i++) {

int argbPixel = argb[i];

byteArray[i * 3] = (byte) ((argbPixel >> 16) & 0xFF); // Red

byteArray[i * 3 + 1] = (byte) ((argbPixel >> 8) & 0xFF); // Green

byteArray[i * 3 + 2] = (byte) (argbPixel & 0xFF); // Blue

}

return byteArray;

}

public static void main(String[] args) throws Exception {

VirtualCdj.getInstance().setDeviceNumber((byte) 4);

CrateDigger.getInstance().addDatabaseListener(new DBService());

props.put("bootstrap.servers", "localhost:9092");

props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");

props.put("value.serializer", "com.bugbytz.prolink.CustomSerializer");

props.put(ProducerConfig.MAX_REQUEST_SIZE_CONFIG, "20971520");

VirtualCdj.getInstance().addUpdateListener(update -> {

if (update instanceof CdjStatus) {

try (Producer<String, DeviceStatus> producer = new KafkaProducer<>(props)) {

DecimalFormat df_obj = new DecimalFormat("#.##");

DeviceStatus deviceStatus = new DeviceStatus(

update.getDeviceNumber(),

((CdjStatus) update).isPlaying() || !((CdjStatus) update).isPaused(),

((CdjStatus) update).getBeatNumber(),

update.getBeatWithinBar(),

Double.parseDouble(df_obj.format(update.getEffectiveTempo())),

Double.parseDouble(df_obj.format(Util.pitchToPercentage(update.getPitch()))),

update.getAddress().getHostAddress(),

byteArrayToMacString(DeviceFinder.getInstance().getLatestAnnouncementFrom(update.getDeviceNumber()).getHardwareAddress()),

((CdjStatus) update).getRekordboxId(),

update.getDeviceName()

);

ProducerRecord<String, DeviceStatus> record = new ProducerRecord<>("device-status", "device-" + update.getDeviceNumber(), deviceStatus);

try {

producer.send(record).get();

} catch (InterruptedException ex) {

throw new RuntimeException(ex);

} catch (ExecutionException ex) {

throw new RuntimeException(ex);

}

producer.flush();

if (!WaveformFinder.getInstance().isRunning()) {

try {

WaveformFinder.getInstance().start();

} catch (Exception ex) {

throw new RuntimeException(ex);

}

}

}

}

});

DeviceFinder.getInstance().addDeviceAnnouncementListener(new DeviceAnnouncementAdapter() {

@Override

public void deviceFound(DeviceAnnouncement announcement) {

if (!streamingPlayers.contains(announcement.getDeviceNumber())) {

streamingPlayers.add(announcement.getDeviceNumber());

schedules.putIfAbsent(announcement.getDeviceNumber(), scheduler.scheduleAtFixedRate(() -> {

try {

Runnable task = () -> {

try {

updateWaveformForPlayer(announcement.getDeviceNumber());

} catch (InterruptedException e) {

System.out.println("Thread interrupted");

} catch (Exception e) {

throw new RuntimeException(e);

}

System.out.println("Lambda thread work completed!");

};

task.run();

} catch (Exception e) {

e.printStackTrace();

}

}, 0, FRAME_INTERVAL_MS, TimeUnit.MILLISECONDS));

}

}

@Override

public void deviceLost(DeviceAnnouncement announcement) {

if (streamingPlayers.contains(announcement.getDeviceNumber())) {

schedules.get(announcement.getDeviceNumber()).cancel(true);

streamingPlayers.remove(announcement.getDeviceNumber());

}

}

});

BeatGridFinder.getInstance().start();

MetadataFinder.getInstance().start();

VirtualCdj.getInstance().start();

TimeFinder.getInstance().start();

DeviceFinder.getInstance().start();

CrateDigger.getInstance().start();

try {

LoadCommandConsumer consumer = new LoadCommandConsumer("localhost:9092", "load-command-group");

Thread consumerThread = new Thread(consumer::startConsuming);

consumerThread.start();

Runtime.getRuntime().addShutdownHook(new Thread(() -> {

consumer.shutdown();

try {

consumerThread.join();

} catch (InterruptedException e) {

Thread.currentThread().interrupt();

}

}));

Thread.sleep(60000);

} catch (InterruptedException e) {

System.out.println("Interrupted, exiting.");

}

}

}
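One arithmetic detail in the class above: FRAME_INTERVAL_MS is computed as 1000 / FPS with integer division, so for FPS = 60 it is 16 ms, and a fixed-rate task with a 16 ms period fires 62.5 times per second rather than 60. The sketch below works through that calculation and shows a nanosecond period as one possible alternative. `FrameInterval` and its methods are hypothetical names introduced for illustration, and whether this mismatch has anything to do with the VLC behaviour is not established here.

```java
// Hypothetical helper illustrating the timer-period arithmetic only.
final class FrameInterval {

    static final long NANOS_PER_SECOND = 1_000_000_000L;

    /** Period in whole milliseconds, matching the integer division 1000 / FPS. */
    static long periodMillis(int fps) {
        return 1000L / fps;
    }

    /** Period in nanoseconds, which loses far less to integer truncation. */
    static long periodNanos(int fps) {
        return NANOS_PER_SECOND / fps;
    }

    /** Rate that a fixed-rate timer with the given period (in nanoseconds) actually produces. */
    static double effectiveFps(long periodNanos) {
        return (double) NANOS_PER_SECOND / periodNanos;
    }

    public static void main(String[] args) {
        long millis = periodMillis(60);
        System.out.println(millis + " ms period gives " + effectiveFps(millis * 1_000_000L) + " fps");
        long nanos = periodNanos(60);
        System.out.println(nanos + " ns period gives " + effectiveFps(nanos) + " fps");
    }
}
```

With the existing scheduler this would correspond to calling scheduleAtFixedRate(task, 0, FrameInterval.periodNanos(FPS), TimeUnit.NANOSECONDS) instead of passing a millisecond period.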


-

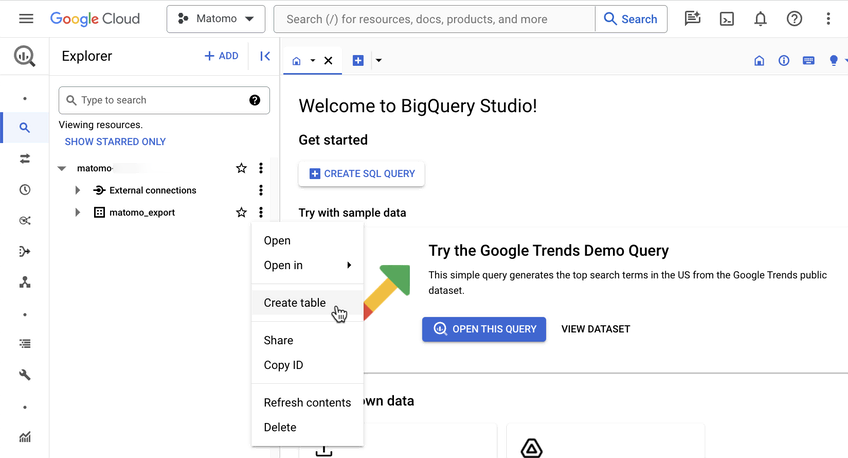

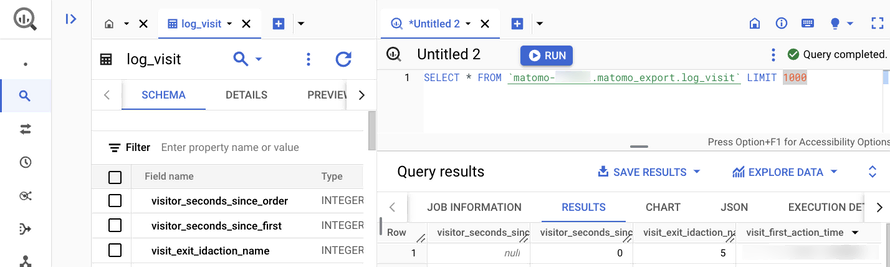

Introducing the BigQuery & Data Warehouse Export feature

30 January, by Matomo Core Team