Other articles (111)

Adding user-specific information and other author-related behaviour changes

12 April 2011

The simplest way to add information to authors is to install the Inscription3 plugin. It also lets you change certain user-related behaviours (refer to its documentation for more information).

It is also possible to add fields to authors by installing the plugins champs extras 2 and Interface pour champs extras.

Automatic backup of SPIP channels

1 April 2010

As part of setting up an open platform, it is important for hosts to have reasonably regular backups to guard against any problem that may arise.

This task relies on two SPIP plugins: Saveauto, which makes regular backups of the database as a MySQL dump (usable in phpMyAdmin); and mes_fichiers_2, which builds a zip archive of the site's important data (documents, elements (...)

Using and configuring the script

19 January 2011

Information specific to the Debian distribution

If you use this distribution, you will need to enable the "debian-multimedia" repositories as explained here:

Since version 0.3.1 of the script, the repository can be enabled automatically after a prompt.

Getting the script

The installation script can be obtained in two different ways.

Via svn, using the following command to fetch the up-to-date source code:

svn co (...)

On other sites (7317)

-

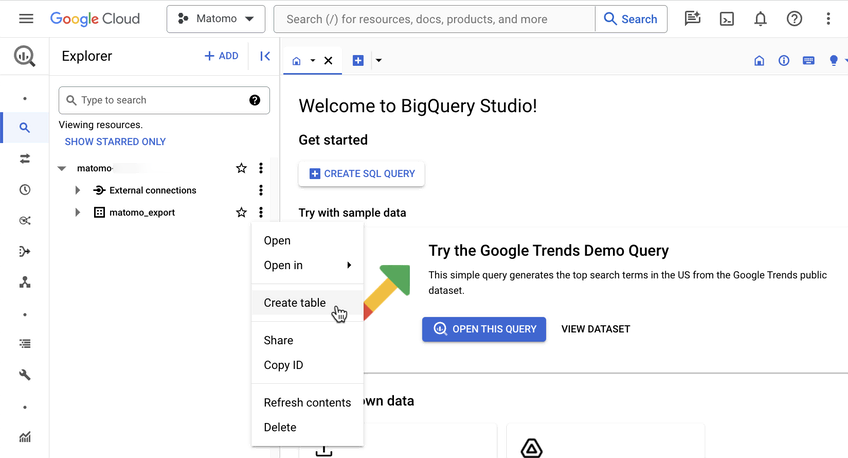

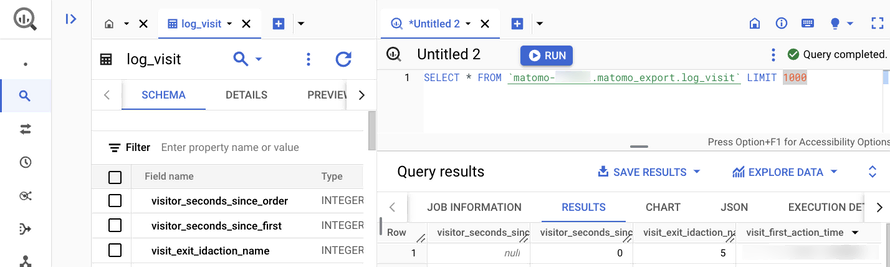

Introducing the Data Warehouse Connector feature

30 January, by Matomo Core Team

Matomo Launches Global Partner Programme to Deepen Local Connections and Champion Ethical Analytics

25 June, by Matomo Core Team (Press Releases)

How to obtain time markers for video splitting using python/OpenCV

30 March 2016, by Bleddyn Raw-Rees

Hi, I'm new to the world of programming and computer vision, so please bear with me.

I'm working on my MSc project, which researches the automated deletion of low-value content in digital file stores. I'm specifically looking at the sort of long shots that often occur in natural history filming, where a static camera is left rolling in order to capture the rare snow leopard or whatever. These shots may have only some 60 s of useful content, with perhaps several hours of worthless content on either side.

As a first step I have a simple motion detection program from Adrian Rosebrock’s tutorial [http://www.pyimagesearch.com/2015/05/25/basic-motion-detection-and-tracking-with-python-and-opencv/#comment-393376]. Next I intend to use FFMPEG to split the video.
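For the splitting step, a command along the following lines might work once the in and out points are known. This is only a sketch: `build_ffmpeg_split_command` and its parameter names are made up for illustration, and it assumes an `ffmpeg` binary is available on the PATH. It relies on the `-ss` (seek to start), `-t` (duration) and `-c copy` (copy streams without re-encoding) options.

```python
def build_ffmpeg_split_command(source, target, start_seconds, end_seconds):
    """Build an ffmpeg argument list that copies the span
    [start_seconds, end_seconds] of `source` into `target`.

    Hypothetical helper for illustration; it only builds the command
    and does not run ffmpeg itself.
    """
    duration = end_seconds - start_seconds
    if duration <= 0:
        raise ValueError("end_seconds must be greater than start_seconds")
    return [
        "ffmpeg",
        "-ss", "{:.3f}".format(start_seconds),  # seek to the in point
        "-i", source,
        "-t", "{:.3f}".format(duration),        # keep this many seconds
        "-c", "copy",                           # no re-encoding
        target,
    ]


if __name__ == "__main__":
    # File names here are placeholders.
    print(" ".join(build_ffmpeg_split_command("in.mp4", "out.mp4", 1.0, 3.0)))
```

The resulting list could be passed to `subprocess.check_call`. Because `-c copy` does not re-encode, the cut snaps to keyframes, so the clip may not start exactly at the requested in point; a frame-accurate cut would need re-encoding.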

What I would like help with is how to get in and out points based on the first and last points at which motion is detected in the video.
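To make the goal concrete, here is a rough sketch of the kind of result I am after, not a tested solution. It assumes one True/False motion flag is recorded for every frame read (for example whether the status text ended up as "Occupied") and that the frame rate is known, perhaps from `camera.get(cv2.CAP_PROP_FPS)` on OpenCV 3. The helper name `motion_in_out_points` is hypothetical.

```python
def motion_in_out_points(motion_flags, fps):
    """Return (in_seconds, out_seconds) spanning the first and last
    frames flagged as containing motion, or None if none were flagged.

    motion_flags: one boolean per frame, in playback order.
    fps: frames per second of the source video.
    """
    first = last = None
    for index, has_motion in enumerate(motion_flags):
        if has_motion:
            if first is None:
                first = index  # first frame with motion
            last = index       # keeps moving to the latest motion frame
    if first is None:
        return None
    fps = float(fps)
    # The out point is the end of the last motion frame, hence last + 1.
    return first / fps, (last + 1) / fps


if __name__ == "__main__":
    flags = [False, False, True, True, False, True, False]
    print(motion_in_out_points(flags, 2))
```

Gaps in the motion are ignored on purpose here: everything between the first and the last detection is kept as one clip. Some padding before and after would probably be wanted in practice.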

Here is my current motion detection code, should you wish to see it:

# import the necessary packages
import argparse
import datetime
import imutils
import time
import cv2

# construct the argument parser and parse the arguments
ap = argparse.ArgumentParser()
ap.add_argument("-v", "--video", help="path to the video file")
ap.add_argument("-a", "--min-area", type=int, default=500, help="minimum area size")
args = vars(ap.parse_args())

# if the video argument is None, then we are reading from webcam
if args.get("video", None) is None:
    camera = cv2.VideoCapture(0)
    time.sleep(0.25)
# otherwise, we are reading from a video file
else:
    camera = cv2.VideoCapture(args["video"])

# initialize the first frame in the video stream
firstFrame = None

# loop over the frames of the video
while True:
    # grab the current frame and initialize the occupied/unoccupied
    # text
    (grabbed, frame) = camera.read()
    text = "Unoccupied"

    # if the frame could not be grabbed, then we have reached the end
    # of the video
    if not grabbed:
        break

    # resize the frame, convert it to grayscale, and blur it
    frame = imutils.resize(frame, width=500)
    gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
    gray = cv2.GaussianBlur(gray, (21, 21), 0)

    # if the first frame is None, initialize it
    if firstFrame is None:
        firstFrame = gray
        continue

    # compute the absolute difference between the current frame and
    # first frame
    frameDelta = cv2.absdiff(firstFrame, gray)
    thresh = cv2.threshold(frameDelta, 25, 255, cv2.THRESH_BINARY)[1]

    # dilate the thresholded image to fill in holes, then find contours
    # on thresholded image; [-2] picks the contour list whether
    # findContours returns two values (OpenCV 2.4/4.x) or three (3.x)
    thresh = cv2.dilate(thresh, None, iterations=2)
    cnts = cv2.findContours(thresh.copy(), cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)[-2]

    # loop over the contours
    for c in cnts:
        # if the contour is too small, ignore it
        if cv2.contourArea(c) < args["min_area"]:
            continue

        # compute the bounding box for the contour, draw it on the frame,
        # and update the text
        (x, y, w, h) = cv2.boundingRect(c)
        cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 0), 2)
        text = "Occupied"

    # draw the text and timestamp on the frame
    cv2.putText(frame, "Room Status: {}".format(text), (10, 20),
                cv2.FONT_HERSHEY_SIMPLEX, 0.5, (0, 0, 255), 2)
    cv2.putText(frame, datetime.datetime.now().strftime("%A %d %B %Y %I:%M:%S%p"),
                (10, frame.shape[0] - 10), cv2.FONT_HERSHEY_SIMPLEX, 0.35, (0, 0, 255), 1)

    # show the frame and record if the user presses a key
    cv2.imshow("Security Feed", frame)
    cv2.imshow("Thresh", thresh)
    cv2.imshow("Frame Delta", frameDelta)
    key = cv2.waitKey(1) & 0xFF

    # if the `q` key is pressed, break from the loop
    if key == ord("q"):
        break

# cleanup the camera and close any open windows
camera.release()
cv2.destroyAllWindows()

Thanks!