Media (91)

-

Valkaama DVD Cover Outside

4 October 2011

Updated: October 2011

Language: English

Type: Image

-

Valkaama DVD Label

4 October 2011

Updated: February 2013

Language: English

Type: Image

-

Valkaama DVD Cover Inside

4 October 2011

Updated: October 2011

Language: English

Type: Image

-

1,000,000

27 September 2011

Updated: September 2011

Language: English

Type: Audio

-

Demon Seed

26 September 2011

Updated: September 2011

Language: English

Type: Audio

-

The Four of Us are Dying

26 September 2011

Updated: September 2011

Language: English

Type: Audio

Other articles (97)

-

MediaSPIP 0.1 Beta version

25 April 2011

MediaSPIP 0.1 beta is the first version of MediaSPIP declared "usable".

The zip file provided here only contains the sources of MediaSPIP in its standalone version.

To get a working installation, you must manually install all software dependencies on the server.

If you want to use this archive for an installation in "farm mode", you will also need to carry out other manual (...)

-

HTML5 audio and video support

13 April 2011

MediaSPIP uses HTML5 video and audio tags to play multimedia files, taking advantage of the latest W3C innovations supported by modern browsers.

The player was created specifically for MediaSPIP and can easily be adapted to fit a specific theme.

For older browsers, the Flowplayer Flash fallback is used.

MediaSPIP allows for media playback on major mobile platforms with the above (...)

-

APPENDIX: Plugins used specifically for the farm

5 March 2010

To function properly, the central/master site of the farm needs several additional plugins compared with the channels: the Gestion de la mutualisation plugin; the inscription3 plugin, which manages registrations and requests to create a shared-hosting instance as soon as users register; the verifier plugin, which provides a field-validation API (used by inscription3); the champs extras v2 plugin, required by inscription3 (...)

On other sites (8308)

-

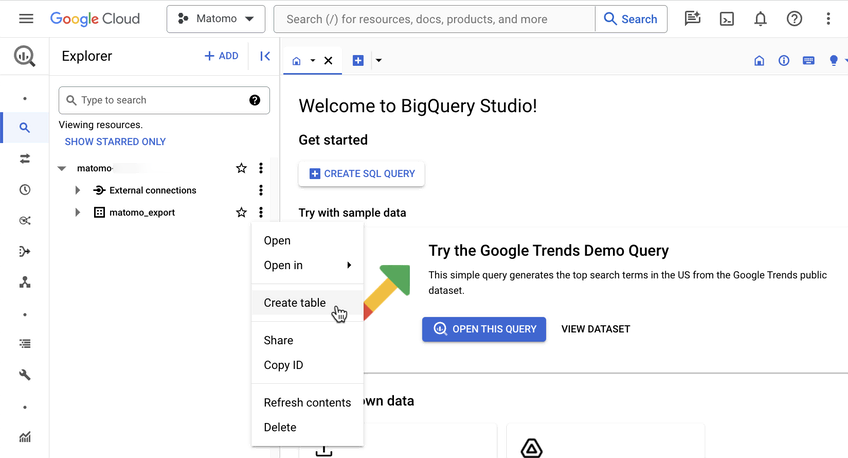

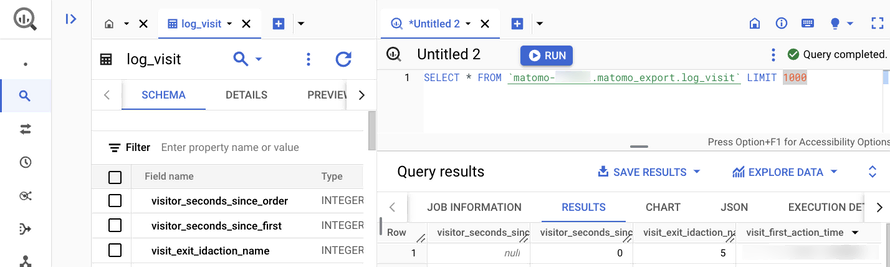

Introducing the Data Warehouse Connector feature

30 January, by Matomo Core Team

-

configure: update copyright year

1 January, by Lynne

-

Unwrapping Matomo 5.2.0 – Bringing you enhanced security and performance

25 December 2024, by Daniel Crough, in Latest Releases